The Weird Place We've Landed With AI at Work

I want to talk about something that's been bugging me for a while — and I'm pretty sure if you work in tech, you've seen it too.

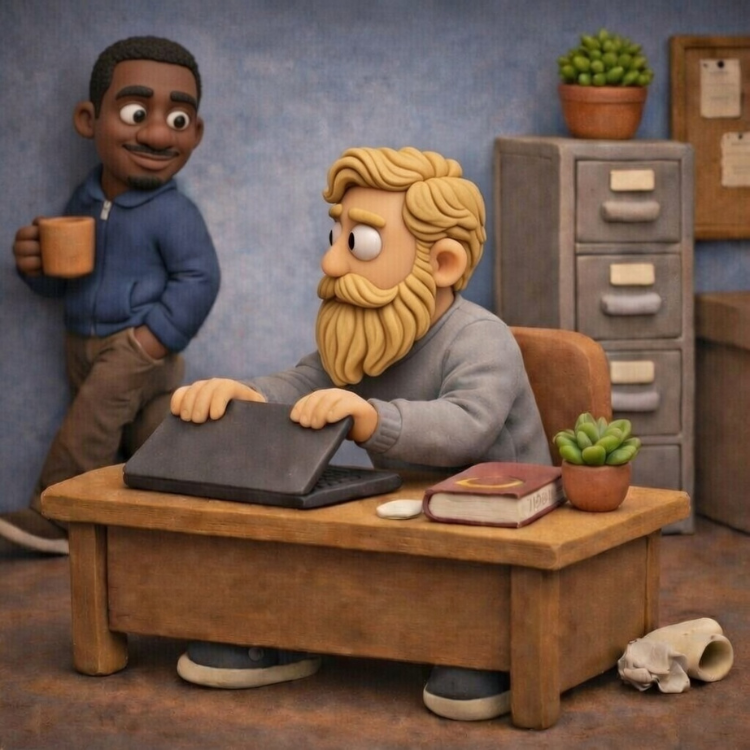

Picture this: someone on your team is heads-down, clearly in a flow state. Typing, reading, nodding slightly to themselves. You walk over to ask a quick question, and in the half-second before you get there — a window minimizes. A tab closes. They sit up a little straighter, suddenly very interested in whatever else is on their screen.

They were using AI. And they didn't want you to see.

I've watched this happen more times than I can count. And every time it does, I feel a little bit of something — not frustration at the person, but frustration at whatever invisible pressure made them feel like they had to hide it in the first place. I guarantee most of the people in that office are doing the exact same thing. They're just better at hiding the tab.

We've created this really strange cultural moment where everyone is using AI tools and almost nobody wants to talk about it openly, face to face. And I think that's worth examining, because it's not doing any of us any good.

Nobody Knows What Side They're Supposed to Be On

Here's what makes this particularly weird. It's not like the tech debates of the old days, where there were two clear camps and you knew where people stood. This is something else entirely.

On one side, you've got the people who are almost performatively enthusiastic. AI is going to change everything, it's the greatest productivity tool ever invented, they're posting LinkedIn updates about how they used it to do a week's worth of work in an afternoon. Fine. Maybe true, even.

On the other side, you've got people who feel like they need to publicly distance themselves from it. Like using AI is somehow admitting defeat, or laziness, or a lack of real skill. You hear things like "I prefer to actually think through my problems" or "I want to really understand what I'm writing." Also fine. Also a valid way to work.

But then there's this massive middle ground, which I'd argue is where most people actually live. It's where you're using it, it's useful, it's sometimes frustrating, it doesn't always get it right, and you don't really feel like making a whole thing out of it either way. You're just... working.

And somehow, that middle ground has become almost impossible to inhabit out loud.

If you're too enthusiastic about AI, some people will quietly wonder if you actually know what you're doing. If you're too critical, you look out of touch. So instead, a lot of people just say nothing. They use their tools privately, keep their opinions to themselves, and perform whatever version of "technically credible" feels safest in the room they're in.

That's exhausting. And it's also kind of absurd.

The Competence Trap

Let me get into the part that bothers me most as an engineering manager, because I think it's the root of a lot of this.

There's an assumption, often unspoken, that using AI means you don't really know how to do something yourself. Like if you asked an AI to help you write a function, you must not actually understand how to write a function. Like the tool is a substitute for knowledge rather than an extension of it.

I've seen this play out in interviews, in code reviews, in casual conversations. The implication is clear: real engineers don't need the assist.

That framing is not just wrong, it's almost exactly backwards.

Think about who actually gets the most out of AI tools. It's not someone who doesn't know what they're doing. A person who doesn't understand the fundamentals can't tell when the AI is confidently wrong. They can't write a prompt that targets the actual problem. They can't take AI-generated code and slot it correctly into a system they understand deeply. They'll ship bugs they don't recognize as bugs. They'll accept architectural suggestions that don't fit the actual constraints of their project.

The people who use AI best are the people who know their craft well enough to guide it, question it, and correct it. The experienced engineers who have written thousands of lines of code by hand, those are the people who can look at AI output and immediately know when something's off. Those are the people who can use the tool to actually move faster without sacrificing quality.

AI in the hands of someone who really knows what they're doing is a force multiplier. That's different from a crutch. And confusing the two is doing real damage to how we think about skill and competence.

What We've Done to Ourselves

I want to be honest about something: I think the tech industry did this to itself, and I say that as someone who's been part of it.

We spent years building up this culture of "10x engineers" and "genius coders" — the mythology of the person who just gets it, who can hold an entire architecture in their head, who writes perfect code fast with no help. That mythology was always a bit of a fiction, but it shaped how people thought about what good engineering looks like.

And now here comes a tool that makes everyone faster, that helps with boilerplate and documentation and research and debugging. And instead of going "great, now we can all focus on the harder problems," a lot of people's first instinct was to treat it as a threat to that identity.

If a tool can help you do something, did you really know how to do it?

Yes. Obviously yes. The tool doesn't know why it's doing what it's doing. You do. The tool doesn't understand your codebase, your users, your constraints, your history. You do. You're the one making the real decisions. The AI is helping you execute them.

A surgeon who uses robotic assistance is still the surgeon. A musician who uses a looper pedal is still the musician. A writer who uses spell check is still the writer. The tool doesn't own the expertise — the person does.

And yet we've convinced ourselves that AI is somehow different. That it crosses some line the other tools didn't. I've thought about why that is, and my best guess is that it feels more cognitive. It's not just automating a physical motion, it's producing something that looks like thinking. That feels threatening in a way that autocomplete didn't, because it's harder to see clearly where the tool ends and the person begins.

But the person is still doing the actual work. The thinking that matters is understanding the problem, defining the constraints, making tradeoffs, deciding what quality looks like, and knowing when to trust the output and when to throw it out. None of that is happening inside the AI. That's still entirely you. The AI is just helping you get from thought to execution faster. That's the job of every tool that's ever existed.

What About Actually Learning, Though?

I want to pause here because I think there's a version of the skeptic's argument that deserves a real answer, not just a wave of the hand.

The concern isn't just ego or identity. Some people genuinely worry, and I think it's worth taking seriously, that if you use AI to do something before you've really learned to do it yourself, you're skipping the part where the knowledge actually sticks. That you're getting the output without building the mental model. And if you never build the mental model, you'll always be dependent on the tool in a way that limits you.

I think that's a real risk. I've seen it happen. Someone early in their career leans so hard on AI-generated code that they can't explain what it does, can't debug it when it breaks, can't adapt it when the requirements change. That's not because they used AI, it's because they used it as a replacement for understanding rather than an accelerant on top of it.

The difference comes down to intentionality. Are you using the tool to move faster on things you already understand? Or are you using it to avoid the work of understanding something at all? Those are two very different behaviors with very different long-term outcomes, and I think it's worth being honest with yourself about which one you're doing.

There's a version of this that's like using a calculator before you understand arithmetic — you can get the answers, but you don't have the foundation to know if the answers make sense. And there's a version that's like using a calculator once you already understand arithmetic — you save time and focus on the harder problem.

Most experienced engineers are in the second camp. Most of the stigma, though, treats everyone like they're in the first. That's the part I want to push back on. Don't judge the tool by the way an inexperienced person uses it. Judge it by what it does in skilled hands.

The Hiding Is the Real Problem

When people can't be honest about how they're working, teams can't learn from each other. Nobody's sharing the prompts that actually work, the use cases where AI is genuinely helpful versus where it falls flat, the mistakes they've made and what they've learned from them. All of that institutional knowledge just evaporates because the baseline assumption is that you're not supposed to be using the tool at all.

And then there's the more personal cost. When you spend energy managing other people's perception of your tools, you're spending less energy actually thinking about your work. That's a tax on everyone.

I also think it creates a weird dynamic on teams. The people who are quietly using AI and getting more done can't talk about why they're getting more done. So they either look like they're sandbagging on the days they're not using it, or they look suspiciously productive and nobody can figure out how to replicate it.

None of this is good. Transparency about how we work is how teams get better. And right now, a lot of teams are flying blind on this because it's become too loaded to just... talk about.

What I Actually Want

I'm not writing this to tell you that AI is perfect or that you should use it for everything or that the concerns people have about it are wrong. There are real questions worth discussing, about when to rely on it and when not to, about what skills still need to be developed the old-fashioned way, about the output quality for different kinds of tasks.

Those are genuinely interesting conversations. I'd love to have them.

What I'm pushing back on is the performance of it all. The idea that you need to pick a side, either wave the AI flag loudly or position yourself as someone too serious and skilled to bother with it. The idea that there's something shameful about using a productivity tool that most people in your industry are using.

The most honest version of where I've landed is this: AI tools are useful for some things and not others. They save me time in certain contexts. They've also confidently given me wrong answers, and I've caught those because I know enough to catch them. I use them. I don't think they're magic. I don't think they're a threat to people who know their stuff. I think they're a tool.

And I think most people, if they could just say what's actually true without it meaning something about their identity or their competence, would land somewhere pretty similar.

Let's Actually Talk About It

If you've felt the weirdness I'm describing (the pressure to either evangelize or condemn, the sense that you can't just be neutral about this), I'd genuinely love to hear from you.

Not to debate whether AI is good or bad. But to talk about what it actually looks like to work alongside these tools thoughtfully, with honesty about what works and what doesn't.

Because the people who are going to navigate this moment best aren't the loudest advocates or the loudest critics. They're the ones who are curious, adaptable, and honest about what's actually happening, both in their work and in the industry around them.

That's the conversation I want to be having. And I think a lot of people are ready for it, if we can just get out of the weird place first.